AquiLLM

AquiLLM (pronounced ah-quill-em) helps researchers manage, search, and interact with their research materials. The goal of AquiLLM is to enable teams to access and preserve their collective knowledge, and to enable new group members to get up to speed quickly.

About AquiLLM

- Research groups struggle to capture and retrieve knowledge distributed across team members.

- Much of this knowledge is informal—emails, notes, and conversations—and remains fragmented or undocumented.

- This constitutes tacit knowledge: experience-based expertise central to research practice.

- Accessing it is time-consuming and requires contextual familiarity.

- Existing RAG systems often do not address the necessary privacy of internal research materials.

- AquiLLM is an open-source, modular RAG-LLM system designed for research groups.

- It supports diverse data types and configurable privacy for both formal and informal knowledge.

Designed for Open-Weight Models and Local Systems

AquiLLM supports local deployment with open-weight models, avoiding dependence on external APIs. Research groups keep control of their data, model selection, and inference within their own computing environments.

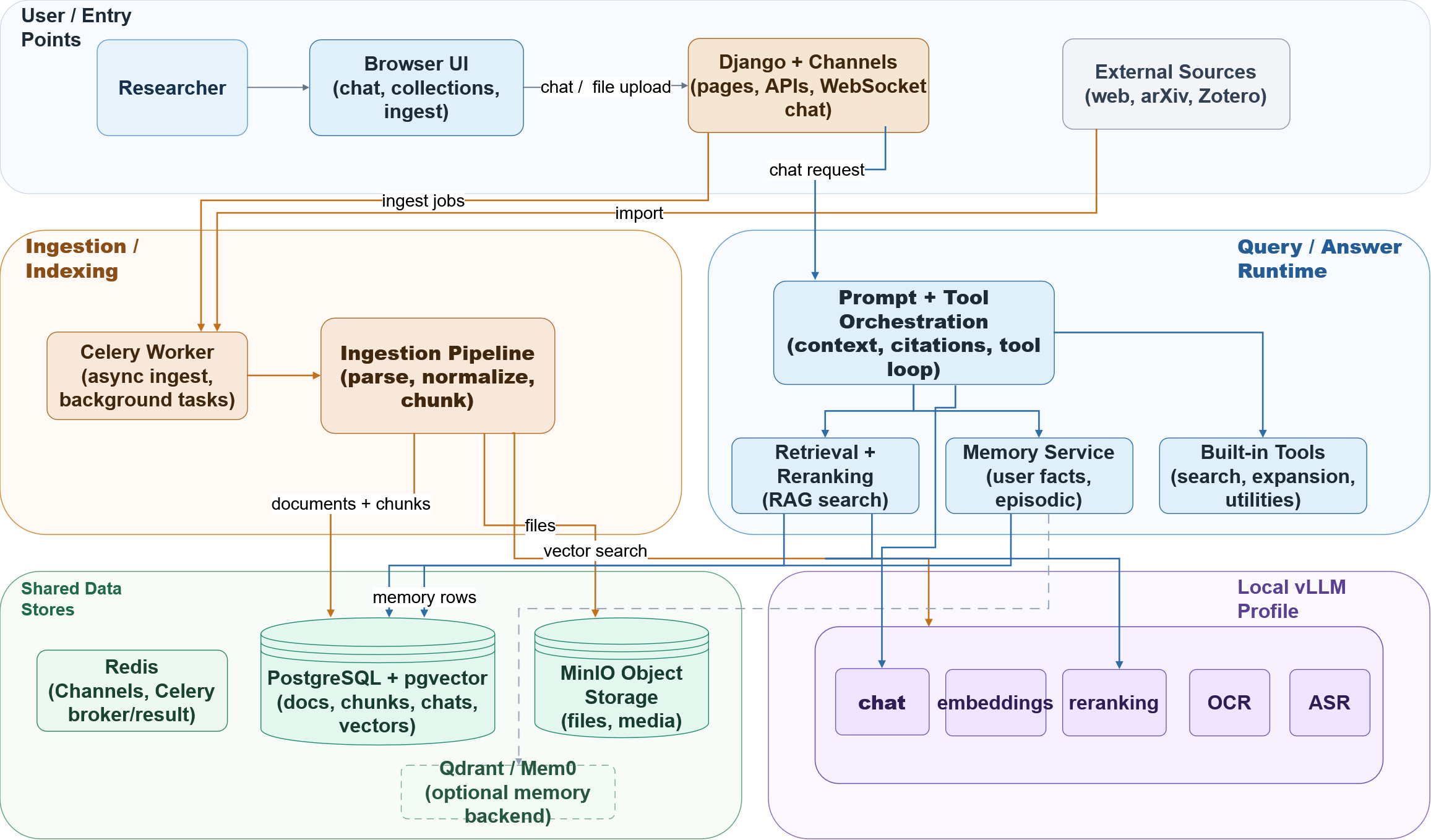

System Architecture

AquiLLM has a modular architecture designed to address core challenges in research knowledge management. The system integrates document processing pipelines, information retrieval mechanisms, contextual memory systems, and locally deployed language models to support scholarly inquiry and collaborative research workflows.
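To make the retrieval-augmented flow concrete, here is a minimal sketch of the general pattern: split documents into chunks, rank chunks against a query, and assemble a prompt for a language model. This is not AquiLLM's actual code. Every name in it (`Chunk`, `chunk_document`, `retrieve`, `build_prompt`) is hypothetical, and the word-count `embed` function is a toy stand-in for a real embedding model. The final step, sending the prompt to a locally deployed model, is omitted.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str  # hypothetical label for the originating document
    text: str


def embed(text):
    # Toy bag-of-words vector; a real system would call an embedding model here.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def chunk_document(source, text, max_words=40):
    # Split a document into fixed-size word windows.
    words = text.split()
    return [
        Chunk(source, " ".join(words[i : i + max_words]))
        for i in range(0, len(words), max_words)
    ]


def retrieve(query, chunks, k=2):
    # Rank chunks by similarity to the query and keep the top k.
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c.text)), reverse=True)
    return ranked[:k]


def build_prompt(query, retrieved):
    # Assemble the context and question into a single prompt string.
    context = "\n".join(f"[{c.source}] {c.text}" for c in retrieved)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Example with two made-up documents.
corpus = chunk_document(
    "meeting-notes",
    "The telescope calibration script must be run before each observing night.",
) + chunk_document(
    "email-thread",
    "Budget reports are due to the department at the end of each quarter.",
)
question = "How do we run telescope calibration?"
top = retrieve(question, corpus, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

Tagging each chunk with its source lets the final answer point back to the note or email it came from, which matters when the material is informal and scattered.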

Research Publication

The methodological foundations, system implementation, and empirical evaluation of AquiLLM are detailed in our peer-reviewed research:

Campbell, C., Boscoe, B., & Do, T. (2025). AquiLLM: a RAG Tool for Capturing Tacit Knowledge in Research Groups. Proceedings of the US Research Software Engineer Association Conference (US-RSE 2025). arXiv:2508.05648

This work presents our approach to knowledge management challenges in collaborative research environments: a privacy-aware, locally deployable retrieval-augmented generation system.

Contributors and Collaborators

Principal Investigators

- Prof. Bernie Boscoe (Southern Oregon University)

- Prof. Tuan Do (UCLA)

Developers

- Chandler Campbell

- Jack Stark

Contributors

- Amy Cheatle (Cornell University)

- Zhuo Chen (University of Washington)

- Andrew Lizarraga (UCLA)

- Jonathan Soriano (UCLA)

- Morgan Himes (UCLA)

- Srinath Saikrishnan (UCLA)

- Jacob Nowack (Southern Oregon University)

- Jackson Godsey (Southern Oregon University)

- Tee Grant (Southern Oregon University)

Former Contributors

- Skyler Acosta (Southern Oregon University)

- Kevin Donlon (Southern Oregon University)

- Elyjah Kiehne (Southern Oregon University)

About the Name

AquiLLM combines "Aquila," the constellation, and "LLM," which stands for large language model. Aquila is one of the most prominent constellations in the northern sky. The name reflects our group's history of working with astronomy and astronomers.

Alfred P. Sloan Foundation


National Science Foundation
